Contribution of intelligent computing to various fields

Architectural innovations at the new-paradigm level will be combined with the hardware and software computing architectures they support, accelerating machine self-programming and application adaptation.

This is the Intelligent Computing Architecture 2.0 mentioned earlier: making machines more autonomous, development easier, and computing smarter.

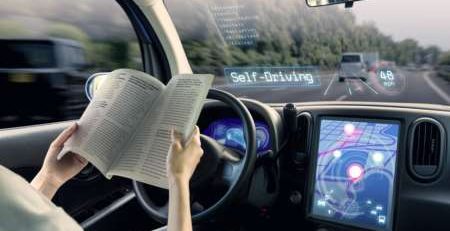

At the same time, there are some clear trends in both hardware and software. In hardware, future chips will converge on a unified neural computing architecture to serve autonomous-robot application scenarios, including intelligent driving. In software, more and more traditional algorithms will be replaced by AI and deep learning algorithms. Neural network algorithms can take over more and more image processing work, such as ISP, video codec, and even GPU applications.

Driven by these trends, the unified neural network computing architecture will account for a growing majority of the computing, storage, area, and power consumption on the chip. No more than 5% of the chip area will be dedicated to serving specific instructions, applications, and algorithms.

That is to say, only by considering software, algorithms, and hardware architecture together in the design can the computing efficiency of the end-to-end computing architecture continue to evolve. With this concept in mind, I have worked on chip design, software platforms, development tools, and compilers for the past few years. This new architecture focuses on the latest neural network architecture designs to meet the needs of autonomous driving scenarios. Its near-memory computing system, systolic tensor array, and large concurrent data bridge give it good computing density and energy efficiency.
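To make the idea of a systolic array more concrete, here is a minimal Python sketch of a generic output-stationary systolic array computing a matrix product. It is a textbook-style illustration and not the design of the architecture described above. The function name `systolic_matmul` and the skewed feeding schedule are assumptions chosen for the example.

```python
def systolic_matmul(a, b):
    """Simulate an output-stationary systolic array computing a @ b.

    Hypothetical illustration: PE (i, j) accumulates c[i][j] in place.
    Values of `a` flow left to right, values of `b` flow top to bottom,
    and each PE only talks to its immediate neighbours.
    """
    m, k_dim, n = len(a), len(b), len(b[0])
    acc = [[0] * n for _ in range(m)]     # accumulator held in each PE
    a_reg = [[0] * n for _ in range(m)]   # operand arriving from the left
    b_reg = [[0] * n for _ in range(m)]   # operand arriving from above
    cycles = m + n + k_dim - 2            # cycles until the last PE finishes

    for t in range(cycles):
        new_a = [[0] * n for _ in range(m)]
        new_b = [[0] * n for _ in range(m)]
        for i in range(m):
            for j in range(n):
                if j == 0:
                    # Left edge: row i is fed with a delay of i cycles.
                    k = t - i
                    new_a[i][j] = a[i][k] if 0 <= k < k_dim else 0
                else:
                    # Interior: take the value the left neighbour held last cycle.
                    new_a[i][j] = a_reg[i][j - 1]
                if i == 0:
                    # Top edge: column j is fed with a delay of j cycles.
                    k = t - j
                    new_b[i][j] = b[k][j] if 0 <= k < k_dim else 0
                else:
                    # Interior: take the value the upper neighbour held last cycle.
                    new_b[i][j] = b_reg[i - 1][j]
                # Multiply-accumulate on the operands now present in this PE.
                acc[i][j] += new_a[i][j] * new_b[i][j]
        a_reg, b_reg = new_a, new_b
    return acc, cycles


if __name__ == "__main__":
    result, cycles = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
    print(result, cycles)
```

In this sketch each operand is read from memory once and then reused as it passes from neighbour to neighbour. That data reuse is the general reason systolic designs are associated with high computing density and energy efficiency.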